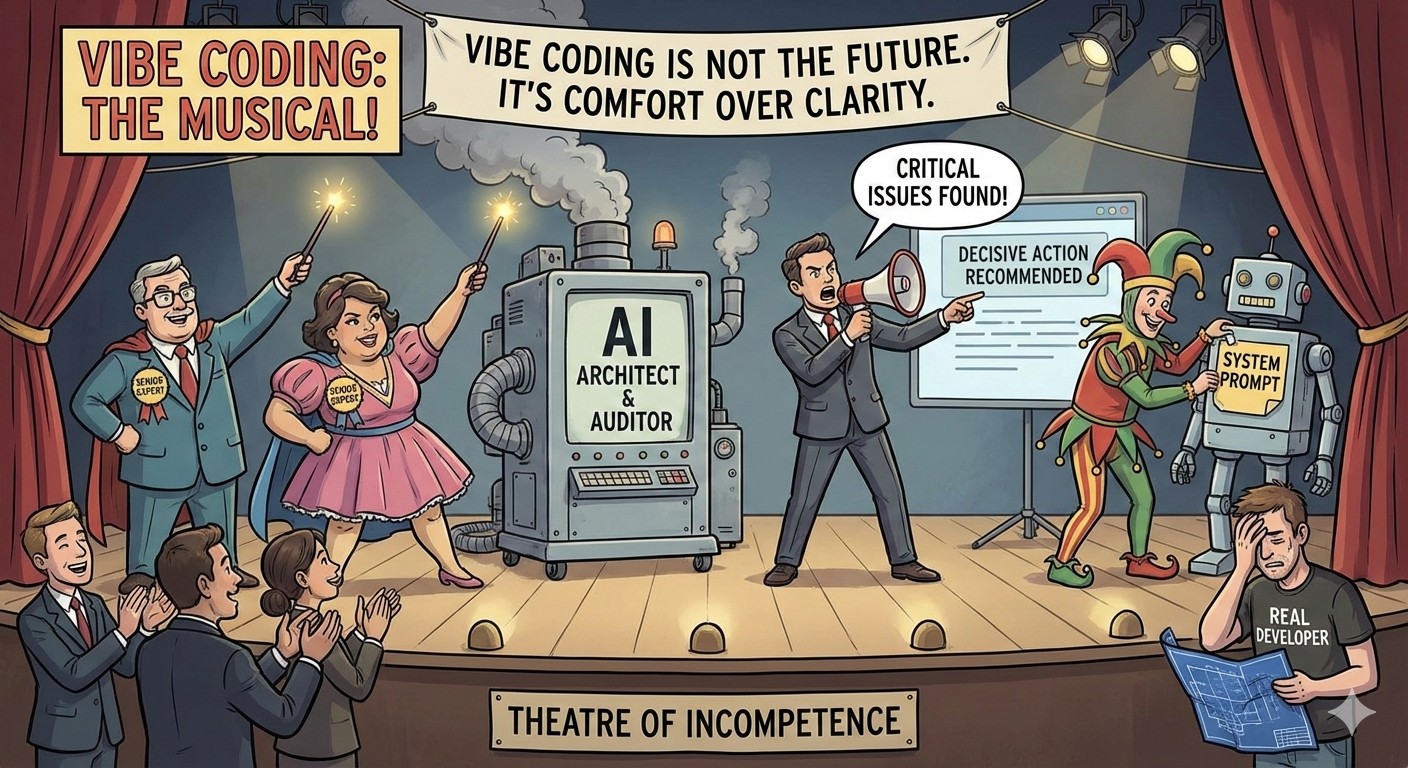

Vibe coding is NOT the future of software

It’s what happens when incompetence finds its voice

You see it everywhere. Someone spins up a few AI agents, pastes in a system prompt that says “you are a senior expert”, runs a scan, and suddenly the project is “full of critical issues”. Not because the system is broken, but because confident language showed up on the screen

Most of these prompts don’t ask the model to think. They ask it to sound right. So the output ends up being familiar patterns, familiar warnings, familiar conclusions. It feels professional and complete. And it’s usually shallow as hell

This fits the incentives perfectly. AI companies don’t get rewarded for hesitation or nuance. They get rewarded for answers that feel decisive. The louder the conclusion, the more useful it seems. And vibe coding is just that logic applied to everything

The result is a lot of activity and very little understanding. Tickets get filed. Docs get written. “Findings” get escalated. Almost nobody stops to ask where authority actually lives in the system, which layer enforces what, or whether the conclusion even maps to reality

That’s how AI “audits” turn into theatre. Everyone is busy, everyone feels productive, and nothing meaningful is being reasoned about. When you remove the human who understands the system from the loop, confidence fills the gap

This isn’t an argument against using AI to write code. Used by an actual developer, models are genuinely useful. They speed things up, compress knowledge, and reduce friction when you already know what you’re doing and why

The problem starts when that same tool gets used outside that context. As soon as models become architects, auditors, or sources of truth, they stop being accelerators and turn into toys. The loud ones.

AI can help engineers move faster. It can’t supply judgement to people who never developed any in the first place.

Vibe coding doesn’t make teams faster. It makes them comfortable. And comfort is exactly how real problems slip by unnoticed

Let’s talk clarity